Background information: Fraud and error in the benefit system statistics, 2022 to 2023 estimates

Published 11 May 2023

Applies to England, Scotland and Wales

Purpose of the statistics

Context and purpose of the statistics

This document supports our main publication which contains estimates of the level of fraud and error in the benefit system in Financial Year Ending (FYE) 2023.

We measure fraud and error so we can understand the levels, trends and reasons behind it. This understanding supports decision making on what actions DWP can take to reduce the level of fraud and error in the benefit system. The National Audit Office takes account of the amount of fraud and error when they audit DWP’s accounts each year.

Within DWP these statistics are used to evaluate, develop and support fraud and error policy, strategy and operational decisions, initiatives, options and business plans through understanding the causes of fraud and error.

The fraud and error statistics published in May each year feed into the DWP accounts. The FYE 2023 estimates published in May 2023 feed into the FYE 2023 DWP annual report and accounts.

The statistics are also used within the annual HM Revenue and Customs National Insurance Fund accounts. These are available in the National Insurance Fund Accounts section of the HMRC reports page.

The fraud and error estimates are also used to answer Parliamentary Questions and Freedom of Information requests, and to inform DWP Press Office statements on fraud and error.

Limitations of the statistics

The estimates do not include reviews of every benefit each year. After a pause in reviews for most benefits in FYE 2021 due to the coronavirus (COVID-19) pandemic, we reviewed a range of benefits for FYE 2022, although we were unable to measure Personal Independence Payment. For FYE 2023 Personal Independence Payment has been measured for the first time since FYE 2020, meaning we have now returned to reviewing the range of benefits that we did before the pandemic.

This document includes further information on limitations – for example, on benefits reviewed and changes this year (sections 1 and 2), omissions to the estimates (section 3), and our sampling approach (section 4).

Longer time series comparisons may not be possible for some levels of reporting due to methodology changes. Our main publication and reference tables indicate when comparisons should not be made.

We are unable to provide sub-national estimates of fraud and error as we are unable to break the statistics down to this level.

Comparisons between the statistics

These statistics relate to the levels of fraud and error in the benefit system in Great Britain.

Social Security Scotland report the levels of fraud and error for benefit expenditure devolved to the Scottish Government within their annual report and accounts.

Northern Ireland fraud and error statistics are comparable to the Great Britain statistics within this report as their approach to collecting the measurement survey data, and calculating the estimates and confidence intervals, is very similar. Northern Ireland fraud and error in the benefit system high level statistics are published within the Department for Communities annual reports.

HM Revenue and Customs produce statistics on error and fraud in Tax Credits.

When comparing different time periods within our publication, we recommend comparing percentage rates of fraud and error rather than monetary amounts. This is because the amount of fraud and error in pounds could go up, even if the percentage rate of fraud and error stays the same or goes down, if the amount of benefit we pay out in total goes up compared to the previous year.
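The sketch below illustrates this point. It assumes the monetary amount of fraud and error is the rate applied to expenditure, and all figures are hypothetical, not published estimates. The rate falls between the two years, but the amount in pounds still rises because expenditure grows.

```python
def monetary_amount(rate, expenditure_m):
    """Fraud and error in £m, given a rate (as a fraction) and expenditure in £m."""
    return rate * expenditure_m


# Hypothetical figures for illustration only.
year_1 = {"rate": 0.040, "expenditure_m": 100_000}
year_2 = {"rate": 0.038, "expenditure_m": 110_000}

amount_1 = monetary_amount(**year_1)  # about £4,000m
amount_2 = monetary_amount(**year_2)  # about £4,180m

print(f"Rate: {year_1['rate']:.1%} -> {year_2['rate']:.1%}")  # Rate: 4.0% -> 3.8%
print(f"Amount: £{amount_1:,.0f}m -> £{amount_2:,.0f}m")
```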

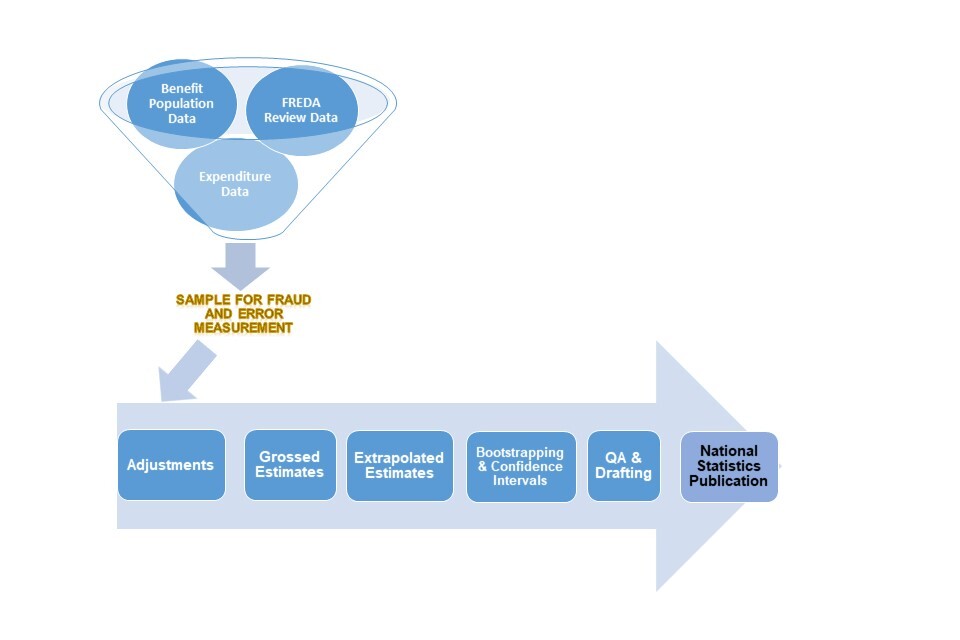

Source of the statistics

We take a sample of benefit claims from our administrative systems. DWP’s Performance Measurement (PM) team contact the benefit claimants to arrange a review. The outcomes of these reviews are recorded on a bespoke internal database called FREDA. We use data from here to produce our estimates.

We also use other data to inform our estimates – for example:

- benefit expenditure data (aligning with the Spring Budget published forecasts)
- benefit recovery data (DWP benefits and Housing Benefit) to allow us to calculate estimates of net loss
- other DWP data sources and models to improve the robustness of, or categorisations within, our estimates – for example, to allow us to see if claimants who leave benefit as a consequence of the fraud and error review process then return to benefit shortly afterwards, and to understand the knock-on effect of fraud and error on disability benefits on other benefits

Further information on the data we use to produce our estimates is contained within sections 4, 5 and 6 of this report.

Definitions and terminology within the statistics

The main publication presents estimates of Fraud, Claimant Error and Official Error. The definitions for these are as follows:

- Fraud: This includes all cases where the following three conditions apply:
  - the conditions for receipt of benefit, or the rate of benefit in payment, are not being met
  - the claimant can reasonably be expected to be aware of the effect on entitlement
  - benefit stops or reduces as a result of the review
- Claimant Error: The claimant has provided inaccurate or incomplete information, failed to report a change in their circumstances, or failed to provide requested evidence, but there is no fraudulent intent on the claimant’s part
- Official Error: Benefit has been paid incorrectly due to inaction, delay or a mistaken assessment by the DWP, a Local Authority or His Majesty’s Revenue and Customs (HMRC) to which no one outside of that department has materially contributed, regardless of whether the business unit has processed the information

We report overpayments (where we have paid people too much money), and underpayments (where we have not paid people enough money).

We present these in percentage terms (of expenditure on a benefit) and in monetary terms, in millions of pounds.

We also report two different measures of the percentage of cases with Fraud or an overpayment error, and of the percentage of cases with an underpayment error, calculated as follows:

Proportion of claims with an overpayment or underpayment (reference tables 12 and 13)

Proportion of claims with Fraud or an overpayment error = (number of claims in the sample with at least one Fraud or at least one overpayment error) / (number of claims in the sample)

Proportion of claims with an underpayment error = (number of claims in the sample with at least one underpayment error) / (number of claims in the sample)

Since the same claim can be included in both the proportion of claims with an overpayment error or Fraud and the proportion of claims with an underpayment error, these figures cannot be summed together to obtain the total proportion of claims paid incorrectly.

Proportion of claims paid the incorrect amount (reference table 11)

Proportion of claims overpaid = (number of claims in the sample ultimately overpaid) / (number of claims in the sample)

Proportion of claims underpaid = (number of claims in the sample ultimately underpaid) / (number of claims in the sample)

These figures can be summed together to obtain the total proportion of claims paid incorrectly.
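The difference between the two measures can be seen on a small hypothetical sample. In this sketch each claim is represented (as an assumption, for illustration) by a list of weekly error amounts in pounds: positive for an overpayment (Fraud or error) and negative for an underpayment. One claim has both kinds of error, so it is counted in both un-netted measures but only once in the net measure.

```python
# Hypothetical sample: each claim is a list of weekly error amounts in pounds,
# positive for an overpayment (Fraud or error) and negative for an underpayment.
sample = [
    [],          # paid correctly
    [75, -25],   # overpayment and underpayment on the same claim
    [40],        # overpayment only
    [-10],       # underpayment only
    [],          # paid correctly
]
n = len(sample)

# Reference tables 12 and 13: a claim counts if it has at least one error of
# that kind, so the same claim can appear in both.
prop_with_overpayment = sum(any(e > 0 for e in claim) for claim in sample) / n
prop_with_underpayment = sum(any(e < 0 for e in claim) for claim in sample) / n

# Reference table 11: a claim counts only by its final (net) outcome.
prop_overpaid = sum(sum(claim) > 0 for claim in sample) / n
prop_underpaid = sum(sum(claim) < 0 for claim in sample) / n

# Summing tables 12 and 13 double counts the second claim; summing the
# table 11 measures gives the proportion of claims paid incorrectly.
print(f"{prop_with_overpayment + prop_with_underpayment:.0%}")  # 80%
print(f"{prop_overpaid + prop_underpaid:.0%}")                  # 60%
```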

Further information about the types of errors we report on, abbreviations commonly used and statistical methodology can be found in the appendices at the end of this document.

Revisions to the statistics

Revisions to our statistics may happen for a number of reasons. When we make methodology changes that impact our estimates, we may revise the estimates for the previous year to allow meaningful comparisons between the two. Where we introduce major changes, we may denote a break in our time series and recommend that comparisons are not made back beyond a certain point.

In our FYE 2023 publication we have revised:

- the proportion of State Pension (SP) cases with an overpayment or underpayment and the proportion of cases with a de minimis overpayment or underpayment
- the monetary value and rate of SP overpaid
- the monetary value and rate of Housing Benefit (HB) over and underpaid
- error reason values for Universal Credit (UC), Employment and Support Allowance (ESA) and Pension Credit (PC)

For more information and the reasons behind the revisions, please see section 2.

The National Statistics Code of Practice allows for revisions of figures under controlled circumstances: “Statistics are by their nature subject to error and uncertainty. Initial estimates are often systematically amended to reflect more complete information. Improvements in methodologies and systems can help to make revised series more accurate and more useful.”

Unplanned revisions of figures in reports in this series might be necessary from time to time. Under this Code of Practice, the Department has a responsibility to ensure that any revisions to existing statistics are robust and are freely available, with the same level of supporting information as new statistics.

Status of the statistics

National statistics

National Statistics status means that our statistics meet the highest standards of trustworthiness, quality, and public value, and it is our responsibility to maintain compliance with these standards.

The continued designation of these statistics as National Statistics was confirmed in December 2017 following a compliance check by the Office for Statistics Regulation. The statistics last underwent a full assessment against the Code of Practice for Statistics in February 2012. Since the latest review by the Office for Statistics Regulation, we have continued to comply with the Code of Practice for Statistics, and have made the following improvements:

- we conducted a user consultation on the frequency of the publication, the benefits measured and on the breakdowns used within the publication. This has resulted in us changing from a bi-annual to an annual publication and beginning to measure Fraud and Error on benefits that have not been measured at all or for a long time. We measured Carer’s Allowance in FYE 2020 and Attendance Allowance in FYE 2022
- we have made some methodological changes, resulting in better understood methodologies and assumptions, improved accuracy of the Fraud and Error statistics, and more consistency across benefits
- we have made a number of changes to improve the relevance and accessibility of our statistics. For example, we have moved away from using the same wording and charts for all the benefits in our publication to instead focus on the key messages for each benefit, updated the categories of error we report in our publication based on user needs, and made the data from our publication available to analysts within DWP to conduct their own analysis
- we have produced the documents in HTML format and provided an accessible version of the reference tables

Read further information about National Statistics on the UK Statistics Authority website.

Quality Statement

Quality in statistics is a measure of their ‘fitness for purpose’. The European Statistics System Dimensions of Quality provide a framework in which statisticians can assess the quality of their statistical outputs. These dimensions of quality are relevance, accuracy and reliability, timeliness, accessibility and clarity, and comparability and coherence.

Section 6 gives information on the application of these quality dimensions to our fraud and error statistics.

Feedback

We welcome any feedback on our publication. You can contact us at:

caxtonhouse.femaenquiries@dwp.gov.uk

Lead Statistician: Michael Holland

DWP Press Office: 020 3267 5144

Report Benefit Fraud: 0800 854 4400

Useful links

Landing page for the fraud and error statistics.

FYE 2023 estimates, including reference tables.

1. Introduction to our measurement system

The main statistical release and reference tables and charts provide estimates of fraud and error for benefit expenditure administered by the Department for Work and Pensions (DWP). This includes a range of benefits for which we derive estimates using different methods, as detailed below. For further details on which benefits are included in the total fraud and error estimates please see Appendix 2. More information can be found online about the benefit system and how DWP benefits are administered.

The fraud and error statistics estimate the amount overpaid or underpaid in total and by benefit, broken down into Fraud, Claimant Error and Official Error for the benefits reviewed this year.

Estimates of fraud and error for each benefit have been derived using three different methods, depending on the frequency of their review (see section 5 for details):

Benefits reviewed this year

Fraud, Claimant Error and Official Error (see definitions above) have been measured for FYE 2023 for Universal Credit (UC), Housing Benefit (HB), Employment and Support Allowance (ESA), Pension Credit (PC), State Pension (SP) and Personal Independence Payment (PIP).

Expenditure on measured benefits accounted for 80% of all benefit expenditure in FYE 2023.

Estimates are produced by statistical analysis of data collected through annual survey exercises, in which independent specially trained staff from the Department’s Performance Measurement (PM) team review a randomly selected sample of cases for benefits reviewed this year. See section 4 for more information on the sampling process.

The review process involves the following activity:

- previewing the case by collating information from a variety of DWP or Local Authority (LA) systems to develop an initial picture and to identify any discrepancies between information from different sources
- interviewing the claimant (or a nominated individual where the claimant lacks capacity) using a structured and detailed set of questions about the basis of their claim. The interview is completed as a telephone review in the majority of cases. However, where this is not appropriate, there is usually also the option for a completed review form to be returned by post
- the interview aims to identify any discrepancies between the claimant’s current circumstances and the circumstances upon which their benefit claim was based

If a suspicion of Fraud is identified, an investigation is undertaken by a trained Fraud Investigator with the aim of resolving the suspicion.

Benefits were measured with different sample periods, although all were contained within the period October 2021 to November 2022. For more information on the sample period for individual benefits please see Annex 1 of the statistical report.

The following numbers of benefit claims were sampled and reviewed by the PM team:

| Benefit | Sample size | Percentage of claimant population reviewed |
|---|---|---|
| Universal Credit | 3,569 | 0.08% |
| State Pension | 1,646 | 0.02% |
| Housing Benefit | 2,978 | 0.11% |
| Pension Credit | 1,987 | 0.14% |
| Employment and Support Allowance | 1,997 | 0.12% |
| Personal Independence Payment | 1,431 | 0.07% |
| Total | 13,608 | 0.06% |

Overall, approximately 0.06% of all benefit claims in payment were reviewed by the PM team.
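As a simple arithmetic check, the sample sizes for the individual benefits in the table add up to the total shown. The short sketch below only reproduces that sum and each benefit's share of the overall sample; it does not attempt to recompute the percentages reviewed, which depend on caseload figures not given here.

```python
# FYE 2023 sample sizes, taken from the table above.
sample_sizes = {
    "Universal Credit": 3_569,
    "State Pension": 1_646,
    "Housing Benefit": 2_978,
    "Pension Credit": 1_987,
    "Employment and Support Allowance": 1_997,
    "Personal Independence Payment": 1_431,
}

total = sum(sample_sizes.values())
print(f"Total claims reviewed: {total:,}")  # Total claims reviewed: 13,608

# Each benefit's share of the overall sample.
for benefit, size in sample_sizes.items():
    print(f"{benefit}: {size / total:.1%}")
```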

Read information about the Performance Measurement Team.

Benefits reviewed previously

Since 1995, the Department has carried out reviews for various benefits to estimate the level of fraud and error in a particular financial year following the same process outlined above. In FYE 2023 around 14% of total expenditure related to benefits reviewed in previous years. Please see Appendix 2 for details of benefits reviewed previously.

Benefits never reviewed

The remaining benefits, which account for around 6% of total benefit expenditure, have never been subject to a specific review. These benefits tend to have relatively low expenditure which means it is not cost effective to undertake a review. For these benefits the estimates are based on assumptions about the likely level of fraud and error (for more information please see section 5).
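One way to picture how the three groups feed into a single overall figure is an expenditure-weighted combination. The sketch assumes each group's monetary amount is its rate multiplied by its expenditure, so the overall rate is a weighted average of the group rates. The shares (80%, 14% and 6%) come from this section. The total expenditure and the group rates are hypothetical placeholders, not published estimates.

```python
# Shares of FYE 2023 benefit expenditure, from this section.
shares = {"reviewed this year": 0.80, "reviewed previously": 0.14, "never reviewed": 0.06}

# Hypothetical inputs for illustration only.
total_expenditure_m = 200_000  # £m
rates = {"reviewed this year": 0.04, "reviewed previously": 0.02, "never reviewed": 0.03}

# Monetary amount per group: rate applied to that group's expenditure.
amounts_m = {group: rates[group] * shares[group] * total_expenditure_m for group in shares}

# Overall rate: total amount over total expenditure (an expenditure-weighted average).
overall_rate = sum(amounts_m.values()) / total_expenditure_m
print(f"Overall rate: {overall_rate:.2%}")
```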

2. Changes to the statistics this year

This section provides detail of changes for the FYE 2023 publication. Any historical changes can be found in Appendix 5.

Revisions

Proportion of State Pension (SP) cases with an overpayment or underpayment and the proportion of cases with a de minimis overpayment or underpayment

For FYE 2022 we reported for the first time on a specific error reason in State Pension categorised as Uprating. These were reported as small value errors caused by how the Pension Strategy Computer System (PSCS) uprates the Graduated Retirement Benefit (GRB) component of State Pension.

We have continued to review our categorisation methodology for State Pension against the benefit’s regulations and legislation and we have identified that we had incorrectly recorded errors (the majority of which were 1p or 2p) on some cases. We have revised the estimates for FYE 2022 in order to allow a direct comparison to FYE 2023. The majority of these errors were reported within the Uprating category, which were reported separately from the proportion of cases in error in FYE 2022. For Overpayments the revised number of cases affected by Uprating is 11 in 100, compared to the previously published figure of 17 in 100. For Underpayments the revised number of cases affected by Uprating is 17 in 100 cases, compared to the previously published figure of 23 in 100.

The headline figures for the proportion of cases with an overpayment error and the proportion with an underpayment error were also affected by this issue. However, they have been revised in line with the 10p de minimis methodology change, which is described under De minimis later in this section.

Monetary value and rate of SP overpaid

Working for a certain period in the UK means that individuals are entitled to a UK State Pension from State Pension age even if they subsequently move outside of the UK (before or after reaching State Pension age).

Reviews for State Pension only cover cases from Great Britain (GB). We apply the GB rate to the whole of the State Pension expenditure, including those cases living overseas. We also estimate an additional amount of Claimant Error for the impact of non-notification of death on State Pension cases living overseas. We see very low rates of Fraud and Claimant Error on the GB caseload and therefore it is reasonable to assume that, aside from non or late notification of death, we would find equally low rates for claimants of State Pension living overseas.

The International Pension Centre collects information on deaths of overseas State Pension claimants, but does not consistently collect information on other changes of circumstances for these cases. This means that it is only possible to measure Fraud and Error overpayments relating to non-notification, or late notification, of death.

Two methods are used by DWP to confirm that overseas SP claimants are still alive and entitled to the benefit. These are as follows:

Life Certificates

A life certificate (LC) is a paper-based form that should be completed by the claimant, signed by a witness and then returned by the claimant. If the completed form is not returned within 16 weeks, the claimant’s benefit is suspended and another LC is sent out. If there is still no response after a further 16 weeks, the claimant’s benefit is terminated. If the LC is returned, the claimant’s benefit entitlement continues (subject to any changes in rate due to changes of circumstance reported by the claimant).
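The life certificate timeline can be sketched as a small function. This is an illustration under stated assumptions, not a description of DWP systems: the function name and status labels are hypothetical. It also assumes that the second 16-week window starts on the day benefit is suspended, and that a certificate returned before termination restores payment.

```python
from datetime import date, timedelta
from typing import Optional

# Hypothetical illustration of the life certificate (LC) timeline.
WINDOW = timedelta(weeks=16)  # response period allowed for each LC


def lc_status(first_sent: date, today: date, returned_on: Optional[date] = None) -> str:
    """Return the status of an overseas SP claim on `today`.

    Assumes the second LC is sent on the day benefit is suspended, so the
    second 16-week window starts when the first one ends.
    """
    suspend_on = first_sent + WINDOW
    terminate_on = suspend_on + WINDOW
    if returned_on is not None and returned_on <= today and returned_on < terminate_on:
        # Entitlement continues, subject to any reported changes of circumstance.
        return "in payment"
    if today < suspend_on:
        return "awaiting first LC"
    if today < terminate_on:
        return "suspended, second LC sent"
    return "terminated"


start = date(2023, 1, 2)
print(lc_status(start, start + timedelta(weeks=20)))  # suspended, second LC sent
```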

Death Exchange

The DWP exchanges death data with Spain, New Zealand, Australia, Germany, Netherlands, Malta, Poland, and the USA. Most of this data is received monthly. COVID had no impact on the DWP receiving death exchange data. The process for death exchanges begins with these countries requesting lists of claimants living in their country and receiving a UK State Pension. Following this, they send the death data for the DWP to process.

Methodology prior to FYE 2022

Prior to FYE 2022 we estimated the additional amount of Claimant Error due to non-notification of death using an estimate calculated in 2006. This was based on data from the January 2004 life certification exercise.

Currently, all SP claimants living abroad who are not resident in countries covered by the death exchange data are included in the LC exercise. However, in January 2004 the death data exchange was not in place, and the LC exercise was conducted on a sample of customers selected using the last digit of their National Insurance number (NINO). Because the last digit of a NINO can be treated as randomly allocated, the sample was regarded as having been selected randomly.

The LC exercise was treated as a “snapshot” of the week in which the life certificates were sent out. For all cases where SP had either been stopped because the customer had died before the life certificate exercise, or had been suspended, the amount of benefit being paid in that week was scaled up to obtain an annual figure. A scaling factor was then applied to gross up from the number of cases in the LC exercise to the total number of SP cases abroad.

The results were then presented as the proportion of expenditure overpaid using expenditure figures for FYE 2004. This rate was then rolled forward and applied to each year’s SP expenditure.
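The pre-FYE 2022 calculation can be sketched as follows. Two details are assumptions, as the text above does not specify them: weekly amounts are annualised by multiplying by 52, and the scaling factor is the number of SP cases abroad divided by the number of cases in the LC exercise. The function name and all input figures are hypothetical.

```python
# Sketch of the pre-FYE 2022 method; all inputs are hypothetical.
WEEKS_PER_YEAR = 52  # assumption: how the weekly snapshot was annualised


def overseas_death_rate(snapshot_weekly_amounts, lc_sample_size, abroad_caseload, sp_expenditure):
    """Share of SP expenditure overpaid through non-notification of death.

    snapshot_weekly_amounts: SP paid in the snapshot week on sampled cases that
    were stopped (claimant died before the exercise) or suspended.
    """
    annual_in_sample = sum(snapshot_weekly_amounts) * WEEKS_PER_YEAR
    scaling_factor = abroad_caseload / lc_sample_size  # sample -> all SP cases abroad
    return annual_in_sample * scaling_factor / sp_expenditure


# Base-year rate from the snapshot exercise (expenditure in pounds)...
rate = overseas_death_rate([100.0, 80.0, 120.0], lc_sample_size=10_000,
                           abroad_caseload=1_000_000, sp_expenditure=40e9)

# ...then rolled forward: the same rate applied to a later year's SP expenditure.
later_year_amount = rate * 100e9
```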

Methodology for FYE 2022

For FYE 2022 we updated our methodology to generate this additional amount using the latest available life certificate and death exchange data. Last year this estimate accounted for around 40% of the total State Pension expenditure overpaid.

Methodology for FYE 2023

While updating and refining the FYE 2022 methodology this year, we found inconsistencies and gaps in the underlying data that call its robustness into question. Had this been identified last year, we would not have made the methodology change for FYE 2022 and would instead have rolled forward the rate that was estimated when State Pension had previously been measured in FYE 2006. While we work to develop an updated estimate of the Claimant Error due to late notification of death for claimants overseas, we have reverted to the estimated rate used prior to FYE 2022. The FYE 2022 estimate has been revised using the same methodology: the figure was originally £50m and has now been revised to £60m.

Monetary value and rate of Housing Benefit (HB) over and underpaid

In last year’s publication, an adjustment was made to the Housing Benefit (HB) expenditure data. The expenditure data was adjusted to categorise Universal Credit (UC) as a passporting benefit because people in receipt of UC who are in supported, sheltered, or temporary housing are treated similarly to those claimants in receipt of other income-related benefits. Over the past year the methodology used to make this adjustment has been refined and an updated methodology will be used from FYE 2023 going forward. To allow consistent comparisons between FYE 2023 and FYE 2022, the FYE 2022 estimates have been revised using this methodology, resulting in some minor changes to the estimates. The total rate of overpayments for HB in FYE 2022 was 5.2% in last year’s publication, and has now been revised to 5.3%. All other changes to the estimates are increases of a similar size.

Changes

Changes to benefits reviewed

Each year we use decision making methodology called multiple-criteria decision analysis (MCDA) to help evaluate which benefits will be reviewed.

The coronavirus outbreak resulted in our normal measurements being suspended or changed in FYE 2021, and although measurements restarted for FYE 2022, PIP still could not be measured.

For FYE 2023 we have measured PIP for the first time since FYE 2020.

Proportion of claims with fraud or an error

In this year’s publication we are introducing a “net” measure of the proportion of claims with fraud or an error to supplement existing tables. This measure is consistent with the calculation of the headline Monetary Value of Fraud and Error (MVFE) and is now reported alongside MVFE in the main statistical release.

Un-netted measure of the proportion of claims with fraud or an error

We have previously published figures on the proportion of cases with an overpayment error or fraud and the proportion with an underpayment. These are calculated on the following basis:

A claim with both an overpayment error and an underpayment error is counted twice – it contributes to published table 12 for overpayments and table 13 for underpayments irrespective of its final outcome.

For example, a case is found to have two errors:

- Error 1: Claimant Error overpayment of £75 per week
- Error 2: Official Error underpayment of £25 per week
- Net Error = £75 overpayment - £25 underpayment = £50 Claimant Error overpayment per week

Error 1 is counted in the overpayment table AND Error 2 is counted in the underpayment table, despite the final outcome being an overpayment – as shown by the net outcome. This multiple counting means the two existing measures cannot be summed to find out how many cases are incorrect.

(Note that where a case has multiple overpayments, only one is counted per case; the same applies to multiple underpayments.)

Net measure of the proportion of claims with fraud or an error

In FYE 2023 we have introduced a new net measure. Using the previous example:

- Error 1 is counted in the net overpayment estimate due to the net outcome for that case being overpayment
- Error 2 is not counted at all as it is removed in the net calculation

Therefore, the net measure represents the number of cases that are ultimately overpaid OR underpaid. This means the overpayment and underpayment net measures can be summed to find the total proportion of claims paid incorrectly. This is published for the first time in FYE 2023 as reference Table 11.
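The worked example can be expressed as a small case-level function that reports which measures a sampled case contributes to. The function name, the dictionary keys and the representation of errors as (error type, weekly amount) pairs are hypothetical choices for illustration. The counting rules follow the description above: at most one count per un-netted table, and the net measure is based only on the overall outcome.

```python
def classify_case(errors):
    """Return which proportion measures a sampled case contributes to.

    errors: list of (error_type, weekly_amount) pairs, with overpayments
    positive and underpayments negative.
    """
    amounts = [amount for _, amount in errors]
    net = sum(amounts)
    return {
        # Un-netted measures: at most one count per table, however many errors.
        "table_12_overpayment": any(a > 0 for a in amounts),
        "table_13_underpayment": any(a < 0 for a in amounts),
        # Net measure: counted only by the overall outcome of the case.
        "table_11_net_overpaid": net > 0,
        "table_11_net_underpaid": net < 0,
        "net_weekly_amount": net,
    }


# The worked example: £75 Claimant Error overpayment, £25 Official Error underpayment.
result = classify_case([("Claimant Error", 75), ("Official Error", -25)])
print(result["net_weekly_amount"])  # 50
```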

Reasons for change:

- to provide a net value consistent with the MVFE statistics (the primary focus of the publication). This allows direct comparison and analysis across the two measures to create a more linked-up story
- the net measure is easier to explain and understand than the un-netted measure. Supplementing the existing tables with a simpler figure showing whether a case is overpaid OR underpaid makes the statistics easier to use and provides valuable context
- the un-netted estimates do not allow the total proportion of claims that are incorrect to be calculated, whereas this follows directly from the net figures

The reference tables have been updated to report this new information; they now include the new net table (Table 11) and de minimis measures (see section below) for tables 11, 12 and 13. Alongside this, our table ordering has changed to make the table layout more cohesive and user friendly.

De minimis

Last year we took a de minimis approach to the proportion of claims paid incorrectly on State Pension, removing all uprating errors that are 10p or less, and announced we would review whether we should take the same approach across all other error types, across all benefits in future publications. Information on the original reason for this approach is available in section 2 of last year’s Background information document.

Following our review, this approach has been extended to all benefits and reasons for error. Updated figures using this methodology have also been produced for FYE 2022 and can be found in this year’s reference tables. Where the proportion of claims paid incorrectly is discussed in the publication we use headline figures, i.e. those with de minimis errors removed.

The use of a de minimis across all benefits eliminates the inconsistency of applying this approach only to State Pension. It means that when discussing the number of cases with an error, only material overpayments and underpayments are considered.

The proportion of cases with a de minimis error are also published in the reference tables and can be added to the headline figures. In Table 11 this will give the proportion of cases that have a net overpayment or net underpayment of any size. In Table 12 this will give the percentage of all cases with at least one overpayment error of any size, and in Table 13 it will give the percentage of cases with at least one underpayment error of any size. See the section above on net measure of the proportion of claims with fraud or an error for a full explanation of the difference between Table 11 versus Tables 12 and 13.

A back series of the proportion of claims paid incorrectly under the new methodology has not been produced. However, the summed total of headline and de minimis figures is comparable with the figures published in previous publications. The summing described above thus allows the new Table 12 to be compared with the old Table 5, and allows the new Table 13 to be compared with the old Table 11. The exception is State Pension in the publication for FYE 2022, which is comparable with this year’s headline figures.
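The split between headline and de minimis figures can be sketched for an overpayment measure of the Table 12 kind. This sketch is built on assumptions. Amounts are held in pence to avoid rounding issues. A case counts as headline if it has at least one overpayment above 10p, and it counts as de minimis if all of its overpayments are 10p or less. Under these assumptions the two proportions add up to the "any size" figure, as described above. Underpayments would be handled in the same way. The sample is hypothetical.

```python
DE_MINIMIS_PENCE = 10  # errors of 10p or less are treated as de minimis


def overpayment_measures(sample):
    """Headline and de minimis proportions of cases with at least one overpayment.

    sample: list of cases, each a list of error amounts in pence
    (positive = overpayment, negative = underpayment).
    """
    n = len(sample)
    headline = de_minimis = 0
    for case in sample:
        overpayments = [e for e in case if e > 0]
        if any(e > DE_MINIMIS_PENCE for e in overpayments):
            headline += 1      # at least one material overpayment
        elif overpayments:
            de_minimis += 1    # only overpayments of 10p or less
    return headline / n, de_minimis / n


# Hypothetical sample of six cases.
sample = [[1500], [2], [2, 1], [], [-300], [5000, 1]]
headline, de_minimis = overpayment_measures(sample)
any_size = headline + de_minimis  # cases with an overpayment of any size
```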

Housing Costs Capping on Universal Credit

The value of Housing Costs overpayments will now be limited (capped) to the amount of housing element in payment on a UC claim. This change has been made to more accurately reflect the amount of housing element that is overpaid on UC.

It was previously possible for Housing Costs overpayments to exceed the value of the housing element on a particular UC claim. If a claimant fails to provide the requested evidence after a Performance Measurement review, their benefit is suspended and then subsequently terminated. A whole award error is then recorded relating to the evidence that we failed to receive. If the claimant does not provide evidence of their liability for Housing Costs, then a whole award Housing Costs Fraud is recorded. It is possible that the whole award value exceeds the housing element value. The amount by which the housing element is overpaid is overstated under these circumstances.

The updated methodology caps the Housing Costs Fraud at the housing element value. Any excess (the value by which the Housing Costs Fraud exceeds the housing element) is distributed across any other whole award Fraud errors on the claim or placed in Failure to Provide Evidence/Engage.

This change does not have any impact on the headline UC overpayment rate. It only affects the allocation of Fraud across the error reasons. Housing Costs overpayments reduce, since capping Housing Costs Fraud can only decrease these error values. The reduction in Housing Costs Fraud is redistributed across other error reasons, depending on which other errors are present on the affected claim.

Making this change to the FYE 2022 estimates removed £82m of Housing Costs Fraud, with £78m moving into Failure to Provide Evidence/Engage, £4m moving into Earnings/Employment and £1m moving into Living Together.
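The capping rule can be sketched for a single claim. The text above does not say how the excess is shared among other whole award Fraud errors. This sketch assumes a split in proportion to their values, and it places the excess in Failure to Provide Evidence/Engage when there are no other whole award Fraud errors. The function name and amounts are hypothetical. As described above, the total across reasons is unchanged, so the headline overpayment rate is unaffected.

```python
FTPE = "Failure to Provide Evidence/Engage"


def cap_housing_costs_fraud(housing_fraud, housing_element, other_whole_award_fraud):
    """Cap Housing Costs Fraud at the housing element and reallocate the excess.

    other_whole_award_fraud: dict mapping other whole award Fraud reasons on the
    claim to their amounts. Returns a dict of reason -> amount after capping.
    """
    capped = min(housing_fraud, housing_element)
    excess = housing_fraud - capped
    result = {"Housing Costs": capped, **other_whole_award_fraud}
    if excess == 0:
        return result
    total_other = sum(other_whole_award_fraud.values())
    if total_other > 0:
        # Assumption: excess shared pro rata across the other whole award Fraud errors.
        for reason, amount in other_whole_award_fraud.items():
            result[reason] = amount + excess * amount / total_other
    else:
        result[FTPE] = result.get(FTPE, 0) + excess
    return result


print(cap_housing_costs_fraud(900, 600, {}))
```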

Reclassification of Failure to Provide Evidence Fraud

If a claimant fails to provide requested evidence after a Performance Measurement review, their benefit is suspended and then subsequently terminated. A whole award error is then recorded relating to the evidence that we failed to receive (e.g., if a bank statement which was requested to verify Capital was not received, then a whole award Capital Fraud is recorded). However, if we had no real suspicions about the evidence apart from the claimant not providing it, we reclassify the whole award error into Failure to provide evidence. For more information on this please see section 5: Causal Links.

We have adopted a data-driven approach to try to reclassify some of the failure to provide evidence errors into known reasons for Fraud. This involves looking at the data we hold on a case four months after classification, to see whether it gives a clearer picture of why the claimant chose not to provide evidence. If this can be determined, we reclassify the error again, from Failure to Provide Evidence/Engage into the error reason the data suggests.

This change does not affect the total amount of fraud and error we report. Making this change to the FYE 2022 figures had the biggest impact on Universal Credit. It moved £117m of Fraud out of Failure to Provide Evidence/Engage: £93m into Self Employed Earnings, £14m into Conditions of Entitlement and £10m into Living Together. All other benefits saw a much smaller movement of Fraud from the Failure to Provide Evidence/Engage category to known reasons for Fraud.

Inclusion of an estimate of the fraud and error on Cost of Living Payments

Cost of Living Payments were made by the department for the first time in FYE 2023, see Cost of Living Guidance. These payments were to give claimants extra support during the current cost of living crisis and were paid in addition to any benefit that qualified a claimant for the payment.

Since the statistics give an estimate of fraud and error on all benefit expenditure, we have included an estimate of the amount of fraud and error associated with these payments in our publication.

Benefit reviews have not been carried out on Cost of Living Payments, so an estimate has been derived. A claimant who is not eligible to receive benefit would also not be eligible to receive a Cost of Living Payment. To estimate the rate of fraud and error on these payments, we have therefore used the rate of cases that lose entitlement on the qualifying benefits. Most of these benefits have been measured in the current or recent years. The table below shows the benefits that qualify claimants for Cost of Living Payments, the loss of entitlement rate used and when each rate was measured:

| Qualifying benefit | Loss of entitlement rate | Source of rate |
|---|---|---|
| Universal Credit | 11.40% | From 2022-23 work programme |
| Income-related Employment and Support Allowance | 1.70% | From 2022-23 work programme |
| Pension Credit | 3.50% | From 2022-23 work programme |
| Personal Independence Payment | 1.90% | From 2022-23 work programme |
| Winter Fuel Payments | 0.50% | From 2021-22 service centre measurement |
| Attendance Allowance | 2.30% | From 2021-22 work programme |
| Income-based Jobseeker’s Allowance | 3.30% | From 2018-19 work programme |
| Disability Living Allowance | 1.90% | Proxy – PIP rate from 2022-23 work programme |
| Armed Forces Independence Payments | 1.90% | Proxy – PIP rate from 2022-23 work programme |
| Constant Attendance Allowance | 2.30% | Proxy – AA rate from 2021-22 work programme |
| Income Support | 3.30% | Proxy – JSA rate from 2018-19 work programme |

The department also made Cost of Living Payments to Adult Disability Payment and Child Disability Payment claimants (devolved Scottish benefits). For the expenditure associated with those benefits, we applied the average loss of entitlement rate across all the benefits above. Cost of Living Payments were also made to Tax Credit claimants (administered by HMRC) and to War Pension Mobility Support claimants (administered by the Ministry of Defence). The department is not responsible for those payments, so they are excluded from our statistics, which focus on fraud and error relating to Department for Work and Pensions expenditure.
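The derivation multiplies the Cost of Living Payment expenditure linked to each qualifying benefit by that benefit's loss of entitlement rate, then sums the results. The Python sketch below uses the rates from the table above. The function name and the expenditure figures in the usage example are hypothetical. Taking a simple (unweighted) mean of the rates for the devolved Scottish benefits is also an assumption, because the text does not say how the average is calculated.

```python
# Loss of entitlement rates from the table above, as proportions.
LOSS_OF_ENTITLEMENT = {
    "Universal Credit": 0.114,
    "Income-related Employment and Support Allowance": 0.017,
    "Pension Credit": 0.035,
    "Personal Independence Payment": 0.019,
    "Winter Fuel Payments": 0.005,
    "Attendance Allowance": 0.023,
    "Income-based Jobseeker's Allowance": 0.033,
    "Disability Living Allowance": 0.019,
    "Armed Forces Independence Payments": 0.019,
    "Constant Attendance Allowance": 0.023,
    "Income Support": 0.033,
}

# Devolved Scottish benefits: given the average rate across the benefits above.
DEVOLVED = {"Adult Disability Payment", "Child Disability Payment"}

# Payments not funded from DWP expenditure, so excluded from the estimate.
EXCLUDED = {"Tax Credits", "War Pension Mobility Support"}


def estimate_colp_fraud_and_error(expenditure: dict[str, float]) -> float:
    """Estimated fraud and error on Cost of Living Payments (hypothetical sketch).

    `expenditure` maps each qualifying benefit to the Cost of Living Payment
    spend linked to it. The average rate is a simple mean (an assumption).
    """
    average_rate = sum(LOSS_OF_ENTITLEMENT.values()) / len(LOSS_OF_ENTITLEMENT)
    total = 0.0
    for benefit, spend in expenditure.items():
        if benefit in EXCLUDED:
            continue  # outside the scope of these statistics
        if benefit in LOSS_OF_ENTITLEMENT:
            total += spend * LOSS_OF_ENTITLEMENT[benefit]
        elif benefit in DEVOLVED:
            total += spend * average_rate
        else:
            raise ValueError(f"No loss of entitlement rate for {benefit!r}")
    return total


# Hypothetical spend in £m, for illustration only.
spend = {
    "Universal Credit": 1000.0,
    "Pension Credit": 200.0,
    "Adult Disability Payment": 50.0,
    "Tax Credits": 300.0,
}
print(round(estimate_colp_fraud_and_error(spend), 1))  # 122.5
```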

The estimate we have used is robust, but it will omit a small amount of fraud and error which could occur directly on Cost of Living Payments. For more information on this please see the omissions part of section 3.

Since we have not carried out benefit reviews on Cost of Living Payments, the estimate is included within the unreviewed benefits section of the reference tables.

Changes to proxy benefits used for unreviewed benefits

For benefits which we have never measured we use a rate from a similar benefit we are currently measuring or have previously measured as a proxy. Alongside Cost of Living Payments being included for the first time we have carried out a full review of all the proxy benefits used in an effort to more accurately estimate the fraud and error on the unreviewed benefits.

The list below shows, for each unreviewed benefit, the old proxy, the new proxy and the rationale for the change:

Winter Fuel Payments

Old proxy: State Pension.

New proxy: Service Centre Measurement.

Rationale: Winter Fuel Payment was measured for the Social Fund Accounts in FYE 2022. Although this was not a review carried out by Performance Measurement, we are using the rate found in this exercise.

Industrial Disablement Benefit and Armed Forces Independence Payments

Old proxy: Disability Living Allowance.

New proxy: Personal Independence Payment.

Rationale: All benefits that previously used Disability Living Allowance as a proxy have been changed to use Personal Independence Payment. Disability Living Allowance has not been reviewed for 15 years, and for working age claimants it is being phased out and replaced by Personal Independence Payment. This brings the proxy up to date, and Personal Independence Payment has similar eligibility requirements.

Maternity Allowance and Severe Disablement Allowance

Old proxy: Employment and Support Allowance.

New proxy: Employment and Support Allowance (but only certain error reasons).

Rationale: Employment and Support Allowance has similar eligibility requirements to both benefits (on Maternity Allowance these relate primarily to recent work and National Insurance contributions). It covers the working age target population of Maternity Allowance, and Severe Disablement Allowance cases are increasingly being transferred to Employment and Support Allowance. Fraud and error on these benefits can only occur due to Abroad, Conditions of Entitlement, Earnings and Contributions, so we have only taken the error rate associated with these error reasons from Employment and Support Allowance.

Widow’s Benefit/Bereavement Benefit/Bereavement Support Payments

Old proxy: Jobseeker’s Allowance.

New proxy: Employment and Support Allowance (Contributory element and certain error reasons only).

Rationale: Jobseeker’s Allowance is being replaced by Universal Credit and has not been measured for a number of years. Fraud and error on Widow’s Benefit/Bereavement Benefit/Bereavement Support Payments can only occur within Conditions of Entitlement, lack of National Insurance contributions or an incorrect record of contributions. Employment and Support Allowance has the same conditions, and therefore the same potential fraud and error reasons. It is also a working age benefit and is still being measured. We have therefore used the error rate associated with these three error reasons on Employment and Support Allowance.

Financial Assistance Scheme

Old proxy: State Pension.

New proxy: State Pension (Official Error rate only).

Rationale: Fraud and Claimant Error are not possible on the Financial Assistance Scheme. Since the Financial Assistance Scheme is not means tested, we have used the Official Error rate of State Pension, which is also not means tested.

Industrial Death Benefit

Old proxy: State Pension.

New proxy: Pension Credit (Living Together rate only).

Rationale: This is a legacy benefit which was stopped in 1988. Rates would have been decided long ago, and the only change that can affect the award is the claimant remarrying, so there is little room for fraud and error. Pension Credit fits the target population, so we have used the Living Together rate on that benefit (which would relate to remarriage).

Christmas Bonus

Old proxy: General.

New proxy: Rate of whole award errors found on the last measurement of Attendance Allowance, Carer’s Allowance, Employment and Support Allowance, Pension Credit, Personal Independence Payment and State Pension.

Rationale: The Christmas Bonus is paid at a flat rate to Attendance Allowance, Carer’s Allowance, Employment and Support Allowance, Pension Credit, Personal Independence Payment and State Pension claimants. As a proxy, we have used the proportion of claims that lose entitlement (that is, have a whole award error) on each of these benefits. Each rate is applied to the share of Christmas Bonus expenditure attributable to that qualifying benefit. The resulting monetary amounts are then totalled and a confidence interval derived.

Note: There are other smaller benefits that also qualify claimants for a Christmas Bonus, but these account for only a very small proportion of Christmas Bonus expenditure. The average loss of entitlement rate across the qualifying benefits listed above is applied to the expenditure associated with those smaller benefits.
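The Christmas Bonus proxy described above can be written as a weighted sum. The notation is ours, not the department's:

- B is the set of six qualifying benefits listed.
- r_b is the loss of entitlement rate found on the last measurement of benefit b.
- E_b is the Christmas Bonus expenditure attributable to claimants of b.
- E_other is the expenditure associated with the smaller qualifying benefits.

Treating the average rate as a simple unweighted mean is an assumption, because the text does not say how it is formed. The confidence interval step is not shown. The Cold Weather Payments proxy follows the same pattern for its five qualifying benefits.

```latex
\widehat{\mathrm{FE}}_{\mathrm{CB}}
  = \sum_{b \in B} r_b \, E_b \;+\; \bar{r} \, E_{\mathrm{other}},
\qquad
\bar{r} = \frac{1}{|B|} \sum_{b \in B} r_b
```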

Cold Weather Payments

Old proxy: State Pension.

New proxy: Rate of whole award errors found on the last measurement of Employment and Support Allowance, Income Support, Jobseeker’s Allowance, Pension Credit and Universal Credit.

Rationale: Cold Weather Payments are paid at a flat rate to Employment and Support Allowance, Income Support, Jobseeker’s Allowance, Pension Credit and Universal Credit claimants. As a proxy, we have used the proportion of claims that lose entitlement (that is, have a whole award error) on each of these benefits. Each rate is applied to the share of Cold Weather Payment expenditure attributable to that qualifying benefit. The resulting monetary amounts are then totalled and a confidence interval derived.

Statutory Sick Pay and Statutory Maternity Pay

Old proxy: General.

New proxy: None.

Rationale: These payments are very straightforward, so there is no real way for fraud and error to occur apart from a claimant having a collusive employer. We therefore estimate fraud and error on them as zero.

State Pension Transfers

Old proxy: State Pension.

New proxy: State Pension.

Rationale: There is probably more room for fraud and error when transferring State Pensions overseas, as there is an extra step in the process. However, there is no other proxy suitable to solve this issue, so we have not changed the proxy.

Note: the General proxy was based on all benefits that have ever been reviewed. In the FYE 2022 publication these were Income Support, Jobseeker’s Allowance, Pension Credit, Housing Benefit, Disability Living Allowance, State Pension, Carer’s Allowance, Incapacity Benefit, Employment and Support Allowance, Universal Credit, Attendance Allowance and Personal Independence Payment.

We have not revised previous years’ figures, because the unreviewed benefits line should not be compared year on year: the set of benefits making up this category changes over time.

3. Interpretation of the results

Care is required when interpreting the results presented in the main report:

- the estimates are based on a random sample of the total benefit caseload and are therefore subject to statistical uncertainties. This uncertainty is quantified by the estimation of 95% confidence intervals surrounding the estimate. These 95% confidence intervals show the range within which we would expect the true value of fraud and error to lie

- when comparing two estimates, users should take into account the confidence intervals surrounding each of the estimates. The calculation to determine whether the results are significantly different from each other is complicated and takes into account the width of the confidence intervals. We perform this robust calculation in our methodology and state in the report whether any differences between reporting years are significant or not

- unless specifically stated within the commentary in the publication or in the reference tables, none of the changes for benefits reviewed this year are statistically significant at a 95% level of confidence when compared to the previous measurement

As well as sampling variation, there are many factors that may also impact on the reported levels of fraud and error and the time series presented.

- these estimates are subject to statistical sampling uncertainties. All estimates are based on reviews of random samples drawn from the benefit caseloads. In any survey sampling exercise, the estimates derived from the sample may differ from what we would see if we examined the whole caseload. Further uncertainties occur due to the assumptions that have had to be made to account for incomplete or imperfect data or using older measurements

- the sample year and the financial year do not align. This means that a proportion of expenditure for benefits reviewed this year cannot be captured by the sampling process. This is mainly because of the delay between sample selection and the interview of the claimant, and also the time taken to process new benefit claims, which excludes the newest cases from the review. The estimates in the reference tables in this release have been extrapolated to account for the newest benefit claims which are missed in the benefit reviews and cover all expenditure

- the estimates do not encompass all fraud and error. This is because Fraud is, by its nature, a covert activity, and some suspicions of Fraud on the sample cases cannot be proven. For example, cash-in-hand earnings are harder to detect than those paid via PAYE. Complex official error can also be difficult to identify. More information on omissions can be found later in this section

- some incorrect payments may be unavoidable. The measurement methodology will treat a case as incorrect, even where the claimant has promptly reported a change and there is only a short processing delay

Omissions from the estimates

The fraud and error estimates do not capture every possible element of fraud and error. Some cases are not reviewed due to the constraints of our sampling or reviewing regimes (or it is impractical to do so from a cost or resource perspective), some cases are out of scope of our measurement process, and some elements are very difficult for us to detect during our benefit reviews. The time period that our reviews relate to means that any operational or policy changes in the last five months of the financial year are usually not covered by our measurements.

For most omissions from our estimates, we make adjustments or apply assumptions to those cases. For some omissions we assume that the levels of fraud and error for those cases are the same as for the cases that we do review, and for other omissions we apply specific assumptions where we expect the levels of fraud and error to be different.

This section details the omissions from the estimates as far as possible. The examples that follow are not an exhaustive list but are an attempt at providing further details on known omissions in the estimates.

There are a number of groups of cases that we are unable to review or which we do not review. Some of the main examples of these are as follows:

New and short-term cases

We are unable to review short duration cases (of just a few weeks in duration) due to the time lags involved in accessing data on the benefit caseloads, drawing the samples and preparing these for reviewing. For these cases, we assume the rates of fraud and error are the same as in the rest of the benefit caseloads. We do, however, also make an adjustment using “new cases factors” to try to ensure that the results are representative across the entire distribution of lengths of benefit claims (see section 5 for further details).

It can take time for new cases to be available for sampling, meaning they are potentially under-represented in the sample. Analysis was undertaken to quantify the impact of these potential exclusions.

New cases make up a small proportion of the caseload for most benefits. The table below shows the yearly average percentage of cases that are less than three months old at any given time.

| Benefit | Average percentage of cases less than three months old | Source |
|---|---|---|
| Employment and Support Allowance | 1.70% | Official quarterly data for August 2021 to May 2022 |
| Pension Credit | 1.50% | Official quarterly data for August 2021 to May 2022 |
| Personal Independence Payment | 4.40% | Official monthly data for November 2021 to October 2022 |
| Housing Benefit | 3.4% | Official monthly new case data for September 2021 to August 2022 |
| Universal Credit | 8.0% | Official monthly data on cases less than three months old, January 2022 to December 2022 |
| State Pension | 2.5% | Estimated using pension and population data |

To investigate the impact of this exclusion, a simulation of the sampling process was performed and repeated multiple times with these cases included. Sensitivity analysis was then carried out across all benefits to estimate the impact of excluding new cases if the error rate were doubled, halved or remained the same.

The age at which a case becomes available for sampling differs by benefit. For example, ESA claims must be at least 6 weeks old and HB claims at least 10 weeks old. Based on these timescales, analyses were undertaken using an exclusion period of either 6 weeks or 3 months.

Overall, this analysis showed that, because short term cases make up such a small percentage of total cases at any given time and many are available to be sampled later in the period, the impact on final published figures for all benefits is negligible.

The impact of excluding new cases is no more than 0.1 percentage points difference in the estimated error rate for either underpayment or overpayment across all benefits except UC. For UC overpayments, the scenario of doubling the error rate increases the total overpayment rate by 0.5 percentage points and halving the error rate decreases it by 0.3 percentage points. The higher numbers reflect the fact that UC has a higher proportion of new cases and a higher rate of error than other benefits.

It would be expected that the rate of fraud and error in new cases would typically be lower than the full population of claimants since they have recently been assessed. The outcomes of this analysis fall within the estimated confidence intervals and there is little impact on the published statistics, therefore no adjustment is required.
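A much-simplified version of this kind of simulation is sketched below in Python. The sketch is hypothetical:

- The caseload is synthetic, and error is counted by cases rather than weighted by expenditure.
- The default figures are illustrative assumptions: an 8% new-case share, loosely echoing the UC figure in the table above, and a 5% error rate.
- It ignores the point, noted above, that many new cases become available for sampling later in the period, so it will tend to overstate the impact.

```python
import random


def simulate_new_case_exclusion(new_share=0.08, base_rate=0.05, multiplier=2.0,
                                n_cases=100_000, sample_size=2_000, runs=200,
                                seed=1):
    """Estimate the bias from never sampling new cases (hypothetical sketch).

    Builds a synthetic caseload in which a `new_share` fraction of cases are
    new, with error probability `base_rate * multiplier`. Established cases
    have error probability `base_rate`. Each run samples only established
    cases. Returns the full-caseload error rate minus the mean sampled
    estimate, in percentage points.
    """
    rng = random.Random(seed)
    n_new = int(n_cases * new_share)
    established = [rng.random() < base_rate for _ in range(n_cases - n_new)]
    new = [rng.random() < base_rate * multiplier for _ in range(n_new)]
    true_rate = (sum(established) + sum(new)) / n_cases

    estimates = []
    for _ in range(runs):
        sample = rng.sample(established, sample_size)  # new cases are never drawn
        estimates.append(sum(sample) / sample_size)
    mean_estimate = sum(estimates) / runs
    return 100 * (true_rate - mean_estimate)


# Doubled, same and halved error rates in the excluded new cases.
for m in (2.0, 1.0, 0.5):
    bias = simulate_new_case_exclusion(multiplier=m)
    print(f"new-case error rate x{m}: bias {bias:+.2f} p.p.")
```

The simulated bias should be close to the back-of-envelope figure new_share × (multiplier − 1) × base_rate. With these defaults, that is roughly +0.4, 0 and −0.2 percentage points for the three scenarios.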

Unclaimed Benefits

We are only able to sample claimants who are in receipt of a benefit payment. Eligible claimants that have not made a benefit claim are not included in these figures. Statistics on benefit take-up can be found online.

Disallowed claims

Claims which do not receive an award are not included in our sample. These claims may have been disallowed in error, resulting in a possible underpayment.

Using data on the number of disallowed claims and appeal success rates, sensitivity analysis was carried out to investigate the impact of various assumed error rates for disallowed cases.

A summary across benefits for various error rate scenarios is shown below. The worst-case scenario is not plausible and is included to illustrate that even assuming an unrealistic level of error, the adjusted estimate would still fall within or close to the published confidence intervals.

Results show that there is likely to be little to no impact on our estimate of underpayments as a result of not sampling disallowed claims.

Employment and Support Allowance (ESA)

| Level of underpayment in excluded group | Estimated change in underpayment | Impact on ESA underpayment estimate | Impact on global underpayment estimate |
|---|---|---|---|
| Extreme worst case: appeal success rate applied to all disallowed claims | +£46.8m | +0.4 p.p. | +0.0 p.p. |
| Disallowed cases have the same error rate as measured cases | +£6.7m | +0.1 p.p. | +0.0 p.p. |
| Twice as many cases are eligible to be awarded on appeal as actually are | +£4.3m | +0.0 p.p. | +0.0 p.p. |

Personal Independence Payment (PIP)

| Level of underpayment in excluded group | Estimated change in underpayment | Impact on PIP underpayment estimate | Impact on global underpayment estimate |
|---|---|---|---|
| Extreme worst case: all disallowed cases are appealed with the same success rate | +£267.1m | +2.0 p.p. | +0.1 p.p. |
| Disallowed cases have the same error rate as measured cases | +£88.0m | +0.6 p.p. | +0.0 p.p. |

Note: PIP worst case analysis is slightly different due to its high appeal rate.

Pension Credit (PC)

| Level of underpayment in excluded group | Estimated change in underpayment | Impact on PC underpayment estimate | Impact on global underpayment estimate |
|---|---|---|---|
| Extreme worst case: appeal success rate applied to all disallowed claims | +£11.3m | +0.2 p.p. | +0.0 p.p. |
| Disallowed cases have the same error rate as measured cases | +£7.2m | +0.1 p.p. | +0.0 p.p. |
| Twice as many cases are eligible to be awarded on reconsideration as actually are | +£0.2m | 0.0 p.p. | +0.0 p.p. |

Universal Credit

Appeals information was not available for UC therefore the sensitivity analysis could not be carried out fully as in the above tables.

Using numbers of disallowed cases, analysis shows that even if the error rate in disallowed cases were double that found in the sample, the additional UC underpayment would be around £29 million, or 0.1% of UC expenditure, well within the confidence interval. If the error rate were the same as in our sample, the difference would be 0.0%. Therefore, no adjustment is appropriate.

State Pension

Recent data on the number of disallowed State Pension claims was not available. However, the data that is available suggests the number is very low. For the most recent year with data on appeal rates (December 2021 to November 2022), sensitivity analysis based on a series of reasonable worst-case assumptions again gave estimated additional underpayments of between 0.0% and 0.1%, with the lower end being much more plausible.

Housing Benefit

Applications for Housing Benefit are processed by local authorities, not centrally by DWP as with the other benefits reviewed, and it was not possible to obtain numbers of disallowed cases.

Since analyses of other benefits have shown a negligible impact from excluding disallowed claims, we assume the impact on Housing Benefit is also negligible.

In conclusion, no adjustments are required to the estimates to account for the exclusion of disallowed cases from the sample.

Nil payment claims

A case is considered to be nil-payment if there is a claim in place but the total award being paid is zero. These cases are not included when the sample is selected. Some benefits do not allow a nil-award, meaning all active claimants are receiving some payment.

| Nil-award allowed | No nil-award allowed |
|---|---|
| ESA | HB |
| PC | SP |
| UC | PIP |

Note: for a very small proportion of the PIP caseload (0.2%), the combination of award rates (daily living and mobility) is reported as nil-nil. Investigations suggest that award rates may be temporarily shown as nil for a short period whilst a claim review is in process, after which the new award rate is set. These cases will be monitored.

Nil-payment claims are a potential source of underpayment that is not included in the sample.

Employment and Support Allowance

For the year up to May 2022, the most recent year for which data was available for analysis, the proportion of claims in nil payment ranged from 5.9% to 6.1%, with an average of 6.0%. Simulating the sampling process shows that around 6.0% of claims that would otherwise be sampled are missed as a result. If the error rate in this group is half the measured rate, the estimate would decrease by 0.1 percentage points; if it is double the measured rate, it would increase by 0.1 percentage points.

Pension Credit

Only a very small percentage of Pension Credit claimants are in nil payment at any given time; for the most recent year of data available, this was always below 0.2%. Simulating the sampling process shows that over 99.8% of sampled cases would be the same even if nil-payment cases were included in the group available for sampling. The potential impact of a different error rate in this group therefore rounds to zero, even in a worst-case scenario of doubling the error rate in the excluded cases.

Universal Credit

For the year up to August 2022, the most recent year for which data was available for analysis, the proportion of claims in nil payment ranged from 11.4% to 17.0%, with an average of 13.2%. Simulating the sampling process shows that around 13.2% of claims that would otherwise be sampled are missed as a result. If the error rate in this group is half the measured rate, the estimate would decrease by 0.1 percentage points; if it is double the measured rate, it would increase by 0.1 percentage points.

Exclusions specific to PIP

The monthly samples are taken from live PIP claims in advance of the benefit reviews being scheduled. Any benefit record relating to a claimant who meets specific exclusion criteria (e.g. terminally ill, or reviewed in the last three months) will not be reviewed. We assume the rates of fraud and error for these cases are the same as for the rest of the PIP caseload. The potential impact of each excluded group is summarised below.

Terminally Ill cases

Terminally ill claimants make up a very small percentage of PIP claimants, typically around 1%. Sensitivity analysis was carried out to test the impact of their exclusion if the fraud and error rate on these cases were double or half that of the sampled population.

| Level of overpayment in excluded group | Estimated change in overpayment | Impact on PIP overpayment estimate | Impact on global overpayment estimate |
|---|---|---|---|
| Double the published rate | +£2.3m | 0.0 p.p. | 0.0 p.p. |
| Half the published rate | -£1.1m | 0.0 p.p. | 0.0 p.p. |

| Level of underpayment in excluded group | Estimated change in underpayment | Impact on PIP underpayment estimate | Impact on global underpayment estimate |
|---|---|---|---|
| Double the published rate | +£5.7m | 0.0 p.p. | 0.0 p.p. |
| Half the published rate | -£2.9m | 0.0 p.p. | 0.0 p.p. |

The small proportion means the total estimated level of fraud and error would differ by less than 0.05 percentage points in the above scenarios, therefore no adjustment has been made to the statistics because of this exclusion.

Scheduled reviews

PIP awards are reviewed regularly. The period between reviews is set on an individual basis and ranges from 9 months to 10 years, with the majority of claimants having a short-term award of 0-2 years. If a claim has had a planned award review in the last 92 days, has a review ongoing or a review due in the next six weeks then it is not eligible to be sampled.

A simulation of the sampling process was performed and repeated multiple times to investigate the impact of this exclusion.

Cases with an upcoming review would be expected to have a higher propensity for Fraud or Error due to the length of time since their last review. By the same reasoning cases that have had a review completed recently would be expected to have a lower propensity for Fraud or Error.

In our statistics an assumption is made that the excluded cases are similar to those sampled and so no adjustments are made. We investigated the impact of alternative assumptions on our estimates and results are shown in the tables below.

In these scenarios, we assumed that an award increased after a planned review would have had an underpayment had we reviewed it. We also assumed that cases decreased or disallowed after review would have had an overpayment. These proportions were taken from PIP data published via Stat-Xplore and fed into the results below.

Two assumptions were tested for recently reviewed cases: that they have no errors, and that they have half the error rate of our sample. The tables below show these assumptions and their impact, combined with the published proportions outlined above.

Estimated impact of excluding cases with a review that is due, ongoing, or recently completed using review outcomes to estimate fraud and error rates

Assumption of zero error on excluded recently reviewed cases.

| | Estimated difference (£) | Impact on PIP estimate (percentage point change) | Impact on global estimate (percentage point change) |
|---|---|---|---|
| Overpayment | -£51.5m | -0.3 p.p. | +0.0 p.p. |
| Underpayment | +£52.4m | +0.3 p.p. | +0.0 p.p. |

Assumption of half the sample error rate on excluded recently reviewed cases.

| | Estimated difference (£) | Impact on PIP estimate (percentage point change) | Impact on global estimate (percentage point change) |
|---|---|---|---|
| Overpayment | +£25.7m | +0.2 p.p. | +0.0 p.p. |
| Underpayment | +£130.5m | +0.7 p.p. | +0.0 p.p. |

The estimated differences in the rates of overpayment and underpayment fall well within the published confidence intervals. No adjustment has been made to the statistics because of this exclusion.

Move to Pension Credit capital risk-based verification

Following the recent Pension Credit take-up campaign, a large increase in Pension Credit claims left the department facing unprecedented demand. It was therefore decided that procedures needed to be simplified, while still adhering to existing legislation. As a result, the department changed its approach to verifying capital on Pension Credit. Previously, where a claimant declared capital in excess of £10,000, verification of certain types of capital was requested, including money held in bank accounts. The department now takes a risk-based approach and will only verify these types of capital in exceptional circumstances.

This change to verification was introduced in August 2022. However, because the sample year does not align with the financial year, we are potentially undercounting the impact of this change on Pension Credit Capital fraud and error rates. We have therefore analysed volumes of starts to Pension Credit to estimate this impact. The analysis used three figures:

- the number of starts to Pension Credit in each week since the new approach to verification was introduced
- the proportion of the FYE 2022 sample that had a Capital error on Pension Credit
- the average monetary amount of Pension Credit Capital fraud and error in FYE 2022

From these figures, it estimated a range within which the additional fraud and error due to the move to risk-based verification lies. Our analysis showed that the overpayment range is negligible and within the confidence intervals for this year's Pension Credit fraud and error estimates. We therefore conclude that the change to capital verification does not require an adjustment to our estimates.

Get Your State Pension

Get Your State Pension (GYSP) is a relatively new system on which claims to State Pension are recorded. It currently only includes claimants who reached state pension age on or after 6th April 2016, referred to as New State Pension (NSP) claims. The proportion of total State Pension claims on this system is approximately 8%.

No cases were selected for review from the GYSP system for FYE 2023 due to an inability to access the system for sampling purposes.

We have analysed the NSP cases included in the sample from the Pension Strategy Computer System (PSCS) to assess whether excluding claims on GYSP impacts on the rate of State Pension expenditure overpaid or underpaid.

The analysis showed that there was likely to be no impact on the rate of State Pension expenditure overpaid. There could potentially be a small difference in the underpayment rate on State Pension, with a potential reduction of 0.1 percentage points to the reported estimates.

Claimants on the GYSP system are being included within the State Pension samples that will feed into the FYE 2024 report. Given this, and the small estimated impact, we have taken the decision not to make an adjustment to the State Pension rate for this year’s release, and have assumed the rate of error found on the GYSP cases is the same as the rate for cases on PSCS for FYE 2023.

Fraud and error occurring directly on Cost of Living Payments

The estimate for Cost of Living Payments only includes fraud and error on payments where the qualifying benefit was incorrectly paid. This means that we omit a small amount of fraud and error that can occur on the payments themselves. These include:

- a small number of Cost of Living Payments which were paid in error to the wrong person

- a number of claims that were incorrectly not paid Cost of Living Payments

The Department estimates that the amount overpaid directly on Cost of Living Payments in FYE 2023 was small and well within the confidence intervals, and that the amount underpaid directly was negligible. Cases incorrectly not paid are picked up by mop-up exercises as soon as they are identified and are then paid at a later date.

Time Lags

The time lags involved in the fraud and error measurement process mean that further omissions are possible. Policy or operational changes made in the last five months of the financial year will not usually be covered by the reviews feeding into the publication, as the reviews tend to finish in October of that financial year. In addition, some cases have not been categorised by the time the estimates are put together, often because of an ongoing fraud investigation. For the purposes of the statistics, “estimated outcomes” are generated for these cases, either by the review officer estimating the most likely outcome of the case or from the results of completed reviews of similar cases.

For all benefits we carried out additional work to better understand the implications of any major policy or operational changes within the financial year. We concluded that the sample period was representative of the financial year. See section 5 for further details.

Work Capability Assessment

Measurement of ESA was first included in the FYE 2014 estimates, and followed the existing methodology for JSA, IS and PC, reviewing the financial side of a claimant’s circumstances. However, the measurement of ESA does not include a review of the Work Capability Assessment; this is also the case for the Work Capability Assessment for claimants on UC.

Universal Credit Transitional Protection

Universal Credit (UC) was introduced to replace older (legacy) benefits, including tax credits. Benefit claimants have gradually moved onto UC through:

- natural migration - when the claimant experiences a change in circumstances, and they need to make a new claim for a benefit that UC has replaced

- voluntary migration - when the claimant voluntarily moves to UC from their existing benefit

- managed migration - when the claimant does not choose to migrate voluntarily and has not migrated naturally

Transitional protection can be applied to claimants who are moved onto UC through the managed migration process. A transitional protection element is applied to ensure that eligible households, with a lower calculated award in UC than their legacy benefit awards, will see no difference in their entitlement at the time they are moved to UC, provided that there is no change in their circumstances during the migration process.
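At the point of migration, the top-up described above amounts to the shortfall between the legacy awards and the calculated UC award. The sketch below is a simplification that assumes both amounts are already expressed monthly and ignores anything that happens after migration; the function names are hypothetical. It also makes plain why an incorrect legacy award feeds straight into the transitional protection element.

```python
# Simplified sketch of the transitional protection (TP) element at the point of
# managed migration. Amounts assumed monthly; names are hypothetical.


def transitional_protection(legacy_total, uc_calculated):
    """TP element so the household's entitlement is not lower on UC."""
    return max(0.0, legacy_total - uc_calculated)


def uc_award_after_migration(legacy_total, uc_calculated):
    """Calculated UC award plus any TP element."""
    return uc_calculated + transitional_protection(legacy_total, uc_calculated)


# Household whose legacy awards (£1,050) exceed its calculated UC award (£900)
print(transitional_protection(1050.0, 900.0))   # TP of £150
print(uc_award_after_migration(1050.0, 900.0))  # matches the legacy total
```

Because the TP element is derived from `legacy_total`, any error in the legacy awards is carried into UC, which is the source of the omission described below.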

The transitional protection element is calculated during the managed migration process. It is based on the circumstances for the eligible household and their legacy benefits in payment that are being replaced by UC.

There is potential for the transitional protection element to be paid incorrectly if the calculation is made incorrectly and/or the legacy benefit awards in payment are incorrect based on the claimant’s circumstances. We are unable to review the transitional protection element calculation or the legacy benefit awards because these can be derived from benefits not administered by DWP. Consequently, fraud and error on this element of UC is omitted from the estimates.

Only a negligible proportion of UC claims in FYE 2023 had been through the managed migration process, and the transitional protection element does not apply to all of these claims. Where it does apply, it accounts for a fraction of the total UC award in payment. Expenditure on the transitional protection element was therefore low in FYE 2023, so the omission was taken to be negligible and no adjustment was made to the UC fraud and error estimates.

Knock-on impact on other benefits

We only review the benefit that has been selected for review, and do not assess any consequential impacts on other benefits. However, in certain circumstances, for some benefits, there may be a knock-on impact on other benefits. For example, changes in entitlement to DLA or PIP affect disability and carer premiums on income-related benefits (specifically IS, PC and HB), as well as CA. We account for this in our estimates by using DWP’s Policy Simulation Model to assess the impact. The Policy Simulation Model is the main micro-simulation model used by DWP to analyse policy changes and is based on the annual Family Resources Survey.

Third party deductions

The accuracy of third party deductions is not measured (i.e. whether the deduction is at the correct amount and is still appropriate). Third party deductions can take place to cover arrears for things like housing charges, fuel and water bills, Council Tax and child maintenance. The rate of benefit is not impacted by any third party deductions, and the amount of any Fraud or Error is based on the “gross” amount of benefit in pay.

UC sanctions

For UC, we do not assess whether the Department follows correct “labour market” procedures and takes any necessary follow up action for non-compliance by claimants (i.e. considers whether a sanction should apply if a claimant fails to apply for a job or leaves a job voluntarily). However, if a sanction decision has already been made when we review a case, then we do assess whether the impact this has on the benefit award is correct.

UC surplus self-employed profit/loss

For UC, we only measure income in the assessment period we are checking. Self-employed people must report their income on a monthly basis. If they receive income that removes their entitlement to UC in one month, and this is above the surplus earnings threshold, then any extra income is carried forward into the next month (the surplus earnings threshold is defined as £2,500 above the amount that removes their entitlement to UC in that month). If a self-employed claimant incurs a loss of any amount within an assessment period, this loss is also rolled forward to the next assessment period. When reviewing the benefit, any rolled forward income or loss is assumed to be correct.
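The carry-forward rules above can be sketched as a small function returning what rolls into the next assessment period, which is the amount a review takes as given. This is a simplified illustration: `nil_award_point` (the income at which UC entitlement falls to zero that month) is a hypothetical input, and the real UC rules contain further detail not covered here.

```python
# Simplified sketch of the carry-forward rules for a self-employed UC claimant.
# `nil_award_point` is a hypothetical input; real rules contain further detail.

SURPLUS_MARGIN = 2_500.0  # surplus earnings threshold sits £2,500 above the nil-award point


def carry_forward(profit, nil_award_point, margin=SURPLUS_MARGIN):
    """Return (surplus_carried, loss_carried) into the next assessment period."""
    if profit < 0:
        # A loss of any size is rolled forward.
        return 0.0, -profit
    threshold = nil_award_point + margin
    surplus = max(0.0, profit - threshold)
    return surplus, 0.0


print(carry_forward(5_000.0, 2_000.0))  # above the threshold: £500 surplus carried
print(carry_forward(3_000.0, 2_000.0))  # ends entitlement but below threshold: nothing carried
print(carry_forward(-300.0, 2_000.0))   # loss of £300 rolled forward
```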

Earnings from the hidden economy

These are claimants who are working but are not declaring those earnings to the government. For every means-tested benefit where capital and earnings affect the award, we require bank statements for all of the claimant’s accounts covering the period of the payment we are checking. This means that any earnings that go through the claimant’s bank accounts are likely to be picked up when those bank statements are checked.

Although we think we capture most of the Fraud related to hidden economy earnings, it is likely that not all of it would be recorded under the “Earnings/Employment” error reason. If the claimant fails to send in bank statements after multiple prompts, their benefit is suspended and ultimately terminated. In these circumstances a whole award Fraud would be recorded (see the Causal Link part of section 5 for more information). However, because the claimant was hiding their earnings from the Government, we would probably not know the underlying reason, so the Fraud would be categorised as “Failure to provide evidence/engage”.

This means that the only earnings we would not pick up are those paid solely “cash in hand” and not deposited into a bank account. We expect the impact of this to be minimal, particularly as, since COVID-19, many cash-only businesses have diversified into accepting bank transfers and card payments, which further reduces this omission.

Cyber-crime

We do find errors relating to cyber-crime, and they are included within the “Conditions of Entitlement” error reason. The benefit reviews that underpin the statistics are very robust and involve not only a lengthy interview with the claimant but also evidence to verify all of their circumstances. It would therefore be very difficult for a fraudulent claimant to meet all these requirements without arousing the suspicions of the reviewing officer.

As with hidden economy earnings, we think we capture most of the Fraud related to cyber-crime, but it is likely that not all of it would be recorded under the “Conditions of Entitlement” error reason. If a claim is fraudulent, the claimant is likely either not to take part in the interview or not to provide the requested evidence, in which case a whole award Fraud would be recorded (see the Causal Link part of section 5 for more information). However, we would probably have no evidence as to why they did this, so the Fraud would be categorised as “Failure to provide evidence/engage”.

Benefit Advances

One of the largest current omissions from our estimates is benefit advances, which are out of scope of our measurement.

UC supports those who are on a low income or out of work. It includes a monthly payment to help with living costs. If a claim is made to UC but the claimant is unable to manage financially until their first payment, they may be able to get a UC Advance, which is then deducted a bit at a time from future payments of the benefit.

The benefit review process for the fraud and error statistics examines cases where benefit is in payment. A benefit advance is not a benefit payment and is not included in the DWP expenditure figures or in our measurement process. Claimants who progress to receive payment of a benefit will be included within the scope of our measurement, but we will only review the existing benefit payment. This will not examine Fraud or Error that may have existed in any prior benefit advance payment. Claimants who only receive a benefit advance, but do not go on to receive a subsequent benefit payment, will not be included within the measurement. Advances are available for a number of benefits but, for FYE 2023, advances for UC constituted the vast majority of expenditure on benefit advances.

We estimate that for FYE 2023 the monetary value of fraud and error on UC advances lies between £10m and £80m.

Rounding policy

In the publication and reference tables, the following rounding conventions have been applied:

- percentages are rounded to the nearest 0.1%

- expenditure values are rounded to the nearest £100 million

- headline monetary estimates are rounded to the nearest £10 million

- monetary estimates for error reasons are rounded to the nearest £1 million

The proportion of claims paid incorrectly is rounded to the nearest 1% in the publication and expressed in the format “n in 100 cases”. The reference tables present the same values as a percentage rounded to the nearest 0.1%.

Individual figures have been rounded independently, so the sum of component items does not necessarily equal the totals shown.
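The conventions above, and the reason components need not add up to the totals, can be illustrated with a short sketch. Monetary values are taken in £ million, and rounding halves away from zero (`ROUND_HALF_UP`) is an assumption: the rounding policy does not say how halves are treated. The component figures are hypothetical.

```python
from decimal import Decimal, ROUND_HALF_UP


def round_to(value, unit):
    """Round `value` to the nearest multiple of `unit`, halves away from zero (assumed)."""
    unit_d = Decimal(str(unit))
    steps = (Decimal(str(value)) / unit_d).quantize(Decimal("1"), rounding=ROUND_HALF_UP)
    return float(steps * unit_d)


# Conventions from the rounding policy (monetary values in £ million)
def round_percentage(pct):          # nearest 0.1%
    return round_to(pct, 0.1)


def round_expenditure_m(value_m):   # nearest £100 million
    return round_to(value_m, 100)


def round_headline_m(value_m):      # nearest £10 million
    return round_to(value_m, 10)


def round_error_reason_m(value_m):  # nearest £1 million
    return round_to(value_m, 1)


def n_in_100(pct):                  # publication format, nearest 1%
    return f"{int(round_to(pct, 1))} in 100 cases"


# Independent rounding: components need not sum to the rounded total
components = [4.4, 4.4]  # hypothetical error-reason values, £m
print(sum(round_error_reason_m(c) for c in components))  # 8.0
print(round_error_reason_m(sum(components)))             # 9.0
```

Working in `Decimal` avoids binary floating-point artefacts (such as 3.65 being stored as slightly less than 3.65) tipping a value the wrong way at a rounding boundary.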

4. Sampling and Data Collection

The fraud and error statistics are determined using a sample of benefit records, since it is not possible to review every benefit record. The sample provides data from which inferences are made about the levels of fraud and error in the whole benefit claimant population.

The number of benefit records to be reviewed is determined by a sample size calculation. The sample size calculation is used to ensure that a sufficient number of benefit records are sampled, which allows meaningful changes in the levels of fraud and error to be detected for the whole benefit claimant population.
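The exact sample size calculation is not set out here. As a hedged illustration of the general idea, the sketch below uses the standard textbook normal-approximation formula for detecting a change between two independent proportions. The formula, the 5% significance level, 80% power and the example proportions are all assumptions, not DWP's method; a calculation based on monetary rates would need a different variance term.

```python
import math
from statistics import NormalDist


def sample_size_two_proportions(p1, p2, alpha=0.05, power=0.8):
    """Records needed per year to detect a change from p1 to p2 (two-sided test).

    Textbook normal-approximation formula:
        n = (z_{1-alpha/2} + z_{power})^2 * (p1(1-p1) + p2(1-p2)) / (p1 - p2)^2
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_beta = z.inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p1 - p2) ** 2)


# Hypothetical: detect a fall in the proportion of cases in error from 5% to 4%
print(sample_size_two_proportions(0.05, 0.04))
```

The required size grows with the inverse square of the change to be detected, which is why the choice of a "meaningful" change drives the number of records reviewed.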

Benefit records are selected on a monthly basis from data extracts of the administrative systems. The population from which the samples are drawn is the set of benefit records that are in payment in a particular assessment period, that is, where there is evidence of a payment relating to the previous month. This population is known as the liveload.

The monthly samples are taken from the liveload in advance of the scheduling of the benefit reviews, to give time for the sample to be checked and for background information to be gathered on each benefit record sampled. Any benefit record relating to a claimant who has been sampled in the last 6 months, or who meets specific exclusion criteria (for example, being terminally ill), will not be reviewed.

We use Simple Random Sampling to select the sample of benefit records for each benefit reviewed in the current year, so that every benefit record has an equal chance of being selected.

This sampling methodology aims to minimise selection bias and to select a sample that is representative of the entire benefit claimant population.
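The monthly selection described above can be sketched as a simple random draw from the liveload followed by the pre-review checks. This is a minimal illustration under stated assumptions: the record fields, the `meets_exclusion` flag and the whole-calendar-month test for "sampled in the last 6 months" are hypothetical simplifications, not the Department's system.

```python
import random
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class BenefitRecord:
    record_id: int
    claimant_id: int
    paid_previous_month: bool  # evidence of a payment relating to the previous month
    meets_exclusion: bool      # e.g. terminally ill (hypothetical flag)


def months_between(earlier, later):
    """Whole calendar months between two dates (simplification)."""
    return (later.year - earlier.year) * 12 + (later.month - earlier.month)


def monthly_sample(records, n, last_sampled, sample_date, seed=None):
    """Simple random sample from the liveload, then drop records failing the checks.

    last_sampled: dict mapping claimant_id to the date that claimant was last sampled
    """
    liveload = [r for r in records if r.paid_previous_month]
    rng = random.Random(seed)
    drawn = rng.sample(liveload, min(n, len(liveload)))  # every record equally likely

    def passes_checks(r):
        previous = last_sampled.get(r.claimant_id)
        recently_sampled = previous is not None and months_between(previous, sample_date) < 6
        return not recently_sampled and not r.meets_exclusion

    return [r for r in drawn if passes_checks(r)]


records = [BenefitRecord(i, i, i % 2 == 0, i % 10 == 0) for i in range(100)]
history = {2: date(2022, 9, 1)}  # claimant 2 was sampled two months earlier
sample = monthly_sample(records, 20, history, date(2022, 11, 1), seed=1)
print(len(sample))
```

Applying the checks after the draw mirrors the description above, where sampled records are checked before review; the achieved sample can therefore be smaller than `n`.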

The benefits sampled for this year and the methodologies applied are as follows:

Simple random sample:

- Employment and Support Allowance

- Pension Credit

- Universal Credit

- State Pension

- Personal Independence Payment

The Housing Benefit methodology was simple random sampling stratified by PSU and by four client groups:

- Working Age in receipt of IS, JSA, ESA, PC or UC

- Working Age not in receipt of IS, JSA, ESA, PC or UC

- Pensioners in receipt of IS, JSA, ESA, PC or UC

- Pensioners not in receipt of IS, JSA, ESA, PC or UC
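Stratified simple random sampling for Housing Benefit can be sketched as grouping records by PSU and by the four client groups listed above, then drawing a simple random sample within each stratum. How the sample is allocated across strata is not stated here, so proportional allocation is an assumption, as is the record layout.

```python
import random
from collections import defaultdict


def client_group(is_pensioner, on_qualifying_benefit):
    """One of the four Housing Benefit client groups."""
    age = "Pensioners" if is_pensioner else "Working Age"
    receipt = "in receipt of" if on_qualifying_benefit else "not in receipt of"
    return f"{age} {receipt} IS, JSA, ESA, PC or UC"


def stratified_sample(records, total_n, seed=None):
    """Simple random sample within each (PSU, client group) stratum.

    records: iterable of (record_id, psu, is_pensioner, on_qualifying_benefit)
    Proportional allocation is assumed; because each stratum's share is rounded,
    the achieved total can differ slightly from total_n.
    """
    strata = defaultdict(list)
    for rec in records:
        _, psu, is_pensioner, on_benefit = rec
        strata[(psu, client_group(is_pensioner, on_benefit))].append(rec)

    population = sum(len(members) for members in strata.values())
    rng = random.Random(seed)
    sample = []
    for key in sorted(strata):  # fixed order makes seeded runs reproducible
        members = strata[key]
        n_h = min(len(members), round(total_n * len(members) / population))
        sample.extend(rng.sample(members, n_h))
    return sample
```

Stratifying guarantees that every PSU and client group combination is represented, which a single unstratified draw does not.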